If you could read my mind, love

What a tale my thoughts could tell

Imagine being able to know what someone was thinking without them having to say anything. Better yet, what if you could respond to them without using any words, just thoughts? Telepathy is a common trope in fantasy and science fiction, and I want to find out if it’s possible. Can we create brain-to-brain communication without any invasive surgery?

Earlier this year I started going deep into the world of Brain-Computer Interfaces. This incredible technology may be the key to many futuristic (and ethically questionable) realities such as mind-reading, brain-controlled prosthetics, and even telepathy. Beyond science fiction dreams, it also promises countless medical improvements for patients with Parkinson’s, paralysis, or other neurological conditions.

We’ll need to cover a few different things to understand how this might one day be possible.

- How does the brain function?

- How do BCIs work?

- How can we send signals back into the brain?

By understanding what technology is available to us, we can start to piece together our own little telepathy helmet.

How the brain works

Our brain contains billions of cells called neurons. These cells create signals that are sent to other areas of the brain or body to trigger a response like blinking, regulating our heartbeat, feeling itchy, or tasting something.

A neuron has 3 main parts (bear with me through the technicalities):

- The cell body → the heart of the cell, which keeps it alive. It contains the cell’s genetic material.

- Dendrites → little sensors that stick out of the cell body like branches or antennae, waiting to receive signals from other neurons.

- The axon → the long projection the neuron uses to send signals to other cells, sometimes in distant parts of the body. This is like the broadcast microphone.

Neurons are the basis for our ability to do anything. Through our nervous system, they connect to virtually every area of our body and are tightly interconnected within our brains. They are what make us move, allow us to think, and create and store memories.

The connection point between one neuron and another is called the synapse. This is where neurons communicate, using electrical signals and chemicals known as neurotransmitters. When a neuron receives input, specific channels in its cell membrane open, letting sodium ions (Na+) flow into the cell and making the inside less negative (depolarizing it). If this change reaches a certain threshold, the neuron fires an action potential: an electrical signal that travels down the axon to the synapse, where it triggers the release of neurotransmitters onto the next cell. This relay repeats until the signal reaches the end of the line and we take action or feel something. EEGs mostly measure post-synaptic potentials: the smaller voltage changes a neuron produces in response to incoming signals, which may or may not push it over the threshold to fire an action potential.
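Computational neuroscientists often approximate this threshold behaviour with a leaky integrate-and-fire model. The sketch below is a simplification rather than a model of real ion channels: the voltage leaks back toward its resting value, incoming input pushes it up, and when it crosses the threshold the neuron “fires” and resets. The resting voltage (-70 mV), threshold (-55 mV), reset value, and time constant are illustrative assumptions.

```python
def simulate_lif(inputs, v_rest=-70.0, v_threshold=-55.0, v_reset=-75.0,
                 tau_ms=10.0, dt_ms=1.0):
    """Leaky integrate-and-fire neuron with illustrative parameters.

    inputs: input drive for each time step (mV per ms, arbitrary scale).
    Returns (voltage trace in mV, list of time steps where the neuron fired).
    """
    v = v_rest
    trace, spikes = [], []
    for step, drive in enumerate(inputs):
        # The leak pulls the voltage back toward rest; the input pushes it up.
        v += dt_ms * (-(v - v_rest) / tau_ms + drive)
        if v >= v_threshold:
            spikes.append(step)  # threshold crossed: fire an action potential
            v = v_reset          # reset slightly below rest, then recover
        trace.append(v)
    return trace, spikes

_, weak_spikes = simulate_lif([1.0] * 200)    # levels off at -60 mV, never fires
_, strong_spikes = simulate_lif([3.0] * 200)  # crosses threshold repeatedly
print(len(weak_spikes), len(strong_spikes))
```

With a constant input the voltage heads toward v_rest + tau × input, so an input of 1.0 levels off at -60 mV (below threshold), while 3.0 would level off at -40 mV and therefore keeps triggering spikes.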

These electrical signals matter for our purposes because EEG technology (covered later) lets us read them and attempt to interpret them. Individual signals are too small to measure from outside the head, like small bubbles rising to the surface of the water and fading away instantly. Instead, we look for groups of neurons that fire simultaneously, like a boiling pot of water, creating a signal large enough for us to track and interpret from outside the brain, through layers of skin and bone.
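A quick toy experiment shows why synchrony matters. The sketch below adds up many identical sine-wave “sources” standing in for neurons. When they all share the same phase, their contributions add up linearly (roughly N times one source); when their timing is random they partly cancel, and the sum only grows like √N. The 10 Hz frequency, sampling rate, and random-phase model are assumptions for illustration, not physiology.

```python
import numpy as np

def summed_amplitude(n_sources, synchronized, freq_hz=10.0, fs=1000, seed=0):
    """Peak of the summed signal from n unit-amplitude sine 'sources' (toy model)."""
    rng = np.random.default_rng(seed)
    t = np.arange(100) / fs  # 100 samples (one 10 Hz cycle at the defaults)
    if synchronized:
        phases = np.zeros(n_sources)  # every source peaks at the same moment
    else:
        phases = rng.uniform(0.0, 2 * np.pi, n_sources)  # random timing
    # One row per source; summing the rows is what a distant sensor picks up.
    waves = np.sin(2 * np.pi * freq_hz * t[None, :] + phases[:, None])
    return float(np.abs(waves.sum(axis=0)).max())

for n in (100, 10_000):
    sync = summed_amplitude(n, synchronized=True)
    unsync = summed_amplitude(n, synchronized=False)
    print(f"{n:>6} sources: synchronized={sync:.0f}, random={unsync:.0f}")
```

The synchronized sum tracks the number of sources, while the random-phase sum stays around its square root, which is why only large, coordinated groups of neurons are visible from the scalp.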

Whenever anything happens in the body, there are neural responses that we can track, though they might be small and hard to detect. This is what researchers are currently studying as they try to understand what happens in our brains when we communicate. Can we tell what words or images someone is thinking of just from these brain signals?

The brain is composed of multiple areas, each of which we use for different things. For example, we use the frontal lobe for motor control (movement), problem-solving, and even speaking. We use the occipital lobe, at the back of the brain, for seeing and interpreting visual images. If we wanted to know what someone is seeing, we would probably need to tap into their visual cortex. This matters once we get into the specific placement of electrodes in an EEG, which depends on what we are trying to measure.

Getting into the machinery

Imagine having a device like a Fitbit or an Apple Watch, but for your brain. It would read your brain activity, let you know when you’ve hit certain focus or movement goals, and even give you a little buzz if something needs your attention. This is a simple example of a Brain-Computer Interface, or BCI (you may also hear the term Brain-Machine Interface). BCIs are a category of devices that connect your brain to a computer or machine using a variety of different technologies. They come in all shapes and sizes and may prove to be a key to achieving inception.

While there are all sorts of BCIs, ranging in invasiveness (non-invasive, semi-invasive, invasive) and measurement technology (EEG, ECoG, fMRI, fNIRS), we will focus on EEG. This technology is the most consumer-friendly: it’s relatively inexpensive, portable, and non-invasive.

Not all BCIs are made equal. Whether a device is non-invasive (external to the body), invasive (in the brain), or somewhere in between affects its cost, portability, and effectiveness. Balancing these three criteria is a tradeoff, and a big part of effectiveness is resolution.

Depending on the type of BCI we are using, we can collect different types of information with different levels of fidelity. In BCI-land this is referred to as resolution. We have two types: spatial and temporal resolution.

Spatial resolution refers to our ability to pinpoint where in the brain the information is coming from. The lower the fidelity, the broader and vaguer the reading. Imagine there was a loud bang outside and I told you the sound came from “over there” and pointed west. That’s low spatial resolution. If instead I said the sound came “from three blocks over, in the red low-rise building between the church and the corner store”, that would be high spatial resolution. The highest might be me telling you which unit in the building the sound came from. As we will see, high spatial resolution comes at a cost.

Temporal resolution refers to how precisely we can track changes in brain activity over time, that is, how quickly a change in the brain shows up in our data. The higher the resolution, the closer we get to real-time readings. Depending on the needs of the device, this has its own tradeoffs. High temporal resolution is usually required when the BCI acts as an input system (controlling an external device or prosthetic) or provides feedback (something being triggered by a brain state). We can get by with lower temporal resolution when timing matters less, such as in research studies or recordings we analyze later.

The tradeoff comes from the technology required to get this data: depending on what we are trying to do, we may have to accept delays, invasive procedures, or limited spatial or temporal resolution.

EEGs are considered to have a high temporal resolution but a low spatial resolution. We are unable to pinpoint specific areas of the brain that are lighting up with the same precision as a more invasive BCI. This makes the data for specific studies challenging to interpret. To try to compensate for this, we can use machine learning (ML) and some preprocessing of the data to try to draw clearer patterns.

How they work

BCIs are used primarily for two tasks: reading and controlling the brain. These words might seem intense or have a lot of baggage associated with them, so let’s unpack them a bit.

Reading your brain

When I say reading from the brain, unfortunately (or maybe fortunately depending on who you are) I don’t mean mind-reading. Without getting too philosophical, let’s start by separating the mind from the brain. The brain is the body’s organ that we already discussed. The mind is an emergent, self-referential concept. Using BCIs we are able to read physiological data from the brain such as blood flow or electrical activity.

Let’s walk through the standard BCI flow.

- Signal Acquisition → This is where we pull data from the brain.

- Signal Processing → This is where we interpret the data from the brain.

- Signal Use → We can use the data now!
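Those three stages map naturally onto a tiny program. In the sketch below, everything is a stand-in: acquisition generates a synthetic 10 Hz wave plus noise instead of reading real hardware, processing reduces it to a single crude feature (average power), and use maps that feature to a hypothetical app command with a made-up threshold.

```python
import math
import random

def acquire(n_samples=256, fs=256, alpha_strength=1.0, seed=0):
    """Signal acquisition (simulated): a 10 Hz 'alpha' wave plus random noise."""
    rng = random.Random(seed)
    return [alpha_strength * math.sin(2 * math.pi * 10 * i / fs) + rng.gauss(0, 0.3)
            for i in range(n_samples)]

def process(samples):
    """Signal processing: reduce the raw samples to one feature (mean power)."""
    return sum(x * x for x in samples) / len(samples)

def use(power, threshold=0.3):
    """Signal use: map the feature to a hypothetical app command."""
    return "relaxed: dim the lights" if power > threshold else "alert: no action"

print(use(process(acquire(alpha_strength=1.0))))
print(use(process(acquire(alpha_strength=0.1))))
```

Keeping the stages as separate functions mirrors the flow above: a real system could swap in a hardware reader, better processing, or a different command without touching the other two.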

Signal Acquisition

Electrical brain signals are produced in response to a stimulus or impulse. Using EEGs, we can detect and record these signals to try to understand them.

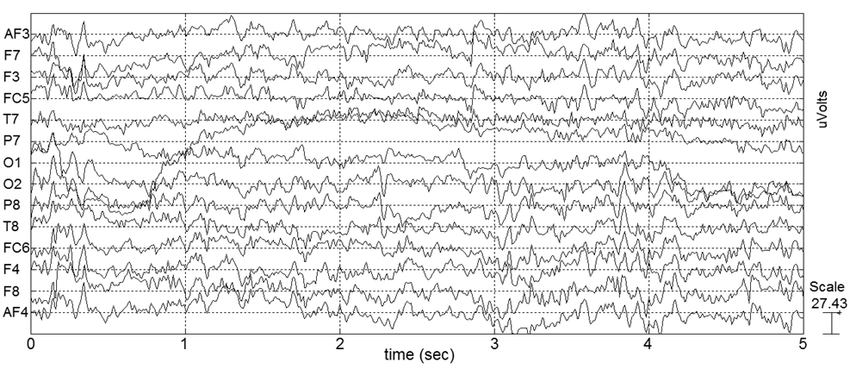

When using an EEG, a series of electrodes is placed on the user’s scalp. EEGs vary in the number and placement of electrodes, with more electrodes bringing higher fidelity (and cost). Most EEGs follow a standardized pattern of electrode placement known as the 10/20 system, which spaces neighbouring electrodes at either 10% or 20% of the total front-to-back or left-to-right distance across the skull. As you can see in the diagram above, electrodes are labelled based on the brain region they sit over, such as F for the frontal lobe and O for the occipital lobe.

Placement is important because we want to make sure we capture ample data in the correct brain region. Each electrode we place represents a different channel in the output, allowing us to filter out channels we might not need. We may choose to focus on specific channels based on our needs and the expected area of brain activity, even changing the physical device if we are targeting a specific area.
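Because each electrode becomes its own channel, picking channels by region is a simple lookup. The sketch below decodes basic 10/20 labels using the common convention that odd numbers sit over the left hemisphere, even numbers over the right, and “z” on the midline. It only handles simple labels (not intermediate ones like “FC1”), and the example montage is an assumption.

```python
REGION_BY_PREFIX = {
    "Fp": "prefrontal",
    "F": "frontal",
    "C": "central",   # the strip around the central sulcus, not a lobe
    "T": "temporal",
    "P": "parietal",
    "O": "occipital",
}

def describe_electrode(label):
    """Split a basic 10/20 label like 'O1', 'Fp2' or 'Cz' into (region, side)."""
    # Try the two-letter prefix before the one-letter ones ('Fp' before 'F').
    for prefix in sorted(REGION_BY_PREFIX, key=len, reverse=True):
        if label.startswith(prefix):
            suffix = label[len(prefix):]
            break
    else:
        raise ValueError(f"unrecognised electrode label: {label}")
    if suffix.lower() == "z":
        side = "midline"
    elif suffix.isdigit():
        side = "left" if int(suffix) % 2 == 1 else "right"
    else:
        raise ValueError(f"unrecognised electrode label: {label}")
    return REGION_BY_PREFIX[prefix], side

def select_channels(labels, region):
    """Keep only the channels over one region, e.g. the visual cortex."""
    return [lab for lab in labels if describe_electrode(lab)[0] == region]

montage = ["Fp1", "Fp2", "F3", "Fz", "F4", "C3", "Cz", "C4",
           "P3", "Pz", "P4", "O1", "Oz", "O2"]
print(select_channels(montage, "occipital"))  # ['O1', 'Oz', 'O2']
```

A device focused on vision, like the NextMind example, would effectively hard-wire this selection by only placing electrodes over the occipital region.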

For example, NextMind was a BCI that let users control their computers with their thoughts. Because they wanted to understand what images the user was seeing, they needed to tap into the visual cortex. The hardware placed multiple electrodes on the back of the user’s head, allowing them to focus their data capture on the visual cortex specifically.

An EEG works by measuring the voltage difference between each electrode and a reference electrode. It records from all electrodes simultaneously and outputs these voltage variations over time, one channel per electrode. The output shows the brain’s ongoing background rhythms as well as activity that changes with the state we are in.
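Because every EEG value is a difference between two points, the choice of reference changes the numbers you see. A common processing step is re-referencing: subtracting either one chosen electrode or the average of all electrodes from every channel. The sketch below assumes the data is a NumPy array shaped (channels, samples); the channel labels and voltages are made up.

```python
import numpy as np

def rereference(data, ref="average", labels=None):
    """Re-express EEG voltages relative to a new reference.

    data:   array of shape (n_channels, n_samples), in microvolts.
    ref:    "average" for the common average reference, or a channel label.
    labels: channel names, needed when ref is a single electrode.
    """
    data = np.asarray(data, dtype=float)
    if ref == "average":
        reference_signal = data.mean(axis=0)        # mean of all channels per sample
    elif labels is None:
        raise ValueError("labels are required to reference a single electrode")
    else:
        reference_signal = data[labels.index(ref)]  # one chosen electrode
    return data - reference_signal                  # every value becomes a difference

labels = ["Fz", "Cz", "Oz"]
raw = np.array([[5.0, 6.0],
                [3.0, 2.0],
                [1.0, 1.0]])                         # made-up voltages, 2 samples each
print(rereference(raw, ref="Cz", labels=labels))    # the Cz row becomes all zeros
```

Referencing to Cz makes that channel flat by definition, while the average reference makes the channels sum to zero at every sample; neither is “correct”, they just answer “relative to what?” differently.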

There are five commonly described types of brainwaves, each associated with a different frequency range.

- delta (0.5–4 Hz) = deep sleep

- theta (4–8 Hz) = drowsiness, deep relaxation, dreaming

- alpha (8–12 Hz) = at rest, passive attention, relaxed

- beta (12–30 Hz) = active mind, anxiety

- gamma (30 Hz+) = problem-solving, concentration, learning, deep thinking

For example, deep sleep usually produces delta brain waves, which are critical for helping us feel rejuvenated. When we are at rest, our brain tends to produce alpha waves.
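To see how a recording gets labelled with one of these bands, the sketch below finds the strongest frequency in a signal with an FFT and names its band. It assumes band edges at 0.5, 4, 8, 12, and 30 Hz (sources draw these lines slightly differently), and the “resting” and “sleeping” signals are synthetic sine waves, not real EEG.

```python
import numpy as np

# Assumed band edges in Hz.
BANDS = [("delta", 0.5, 4), ("theta", 4, 8), ("alpha", 8, 12),
         ("beta", 12, 30), ("gamma", 30, float("inf"))]

def band_name(freq_hz):
    """Name the brainwave band a frequency falls into."""
    for name, low, high in BANDS:
        if low <= freq_hz < high:
            return name
    return "below delta"

def dominant_band(signal, fs):
    """Find the strongest frequency in a signal with an FFT and name its band."""
    signal = np.asarray(signal, dtype=float)
    spectrum = np.abs(np.fft.rfft(signal - signal.mean()))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    return band_name(freqs[np.argmax(spectrum)])

fs = 256
t = np.arange(2 * fs) / fs                # two seconds of samples
resting = np.sin(2 * np.pi * 10 * t)      # synthetic 10 Hz rhythm
sleeping = np.sin(2 * np.pi * 2 * t)      # synthetic 2 Hz rhythm
print(dominant_band(resting, fs), dominant_band(sleeping, fs))  # alpha delta
```

Real EEG mixes all of these bands at once, so in practice it is more useful to measure how much power falls in each band than to pick a single winner.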

The EEG output is a combination of waves at these different frequencies. Because the EEG records the summed activity of many sources continuously over long periods, it can be hard to isolate the response to a specific event we are looking for. Event-related potentials (ERPs) are voltage changes that directly result from, and are time-locked to, a specific external event. By repeating the same test many times, averaging the recordings, and then processing the data, we can identify specific ERPs within the EEG data.
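Averaging works because the ERP shows up at the same moment after every event, while the background activity does not. The toy simulation below buries a small bump in much larger random noise on each trial, then averages 400 trials. The bump’s shape and size, the noise level, and the trial count are made-up assumptions; the point is that averaging N trials shrinks random noise by roughly √N.

```python
import numpy as np

rng = np.random.default_rng(0)
n_trials, n_samples = 400, 200
t = np.arange(n_samples)

# A small "ERP" bump (2 µV, peaking at sample 100) that follows every event...
erp = 2.0 * np.exp(-((t - 100) ** 2) / (2 * 10.0 ** 2))
# ...buried in much larger background activity (5 µV noise) on every trial.
trials = erp + rng.normal(0.0, 5.0, size=(n_trials, n_samples))

# Trials are time-locked to the event, so the ERP lines up and survives averaging,
# while noise that isn't locked to the event averages out (by about sqrt(n_trials)).
average = trials.mean(axis=0)

single_error = np.abs(trials[0] - erp).mean()
average_error = np.abs(average - erp).mean()
print(f"one trial: {single_error:.2f} µV off, average: {average_error:.2f} µV off")
```

In a single trial the bump is invisible; in the average it stands out clearly, which is why ERP studies repeat the same stimulus so many times.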

Signal Processing

We need to process the data and separate out any noise or bias in it. Less invasive methods are harder to read because their signals are weaker and noisier than those from invasive devices. Depending on what we measure, this noise can come from stray electrical signals (EEG/ECoG) or unrelated changes in blood flow (fMRI). Noise also comes from bodily movements, voluntary and involuntary: there are hundreds of things happening at once even while you’re just trying to focus on something like reading! Additionally, signals are dampened by things like your skull, making it harder to pinpoint the specific areas we are looking for.

This is why we need to try to amplify and process the data. Cleaning up data is still an ongoing field of development, but there are generally accepted approaches to pre-processing.

To start, it’s possible one of your channels (data from a single electrode) is no good. Maybe it was malfunctioning or it lost its placement during the test. You’ll want to exclude this so it doesn’t muddy the rest of your data.
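Spotting a dead or misbehaving channel can be automated with a simple rule of thumb. The sketch below flags channels that are nearly flat or far noisier than the typical channel. The 0.5 µV and 5× thresholds, the channel names, and the simulated data are all assumptions, not standard values.

```python
import numpy as np

def find_bad_channels(data, labels, flat_uv=0.5, noisy_ratio=5.0):
    """Flag channels that are nearly flat or much noisier than the others.

    data: (n_channels, n_samples) array in microvolts.
    """
    data = np.asarray(data, dtype=float)
    sd = data.std(axis=1)        # how much each channel moves
    typical = np.median(sd)      # the median isn't thrown off by the bad channels
    return [lab for lab, s in zip(labels, sd)
            if s < flat_uv or s > noisy_ratio * typical]

rng = np.random.default_rng(1)
labels = ["F3", "F4", "C3", "C4", "P3", "P4", "O1", "O2"]
data = rng.normal(0, 10, size=(8, 1000))   # healthy channels: ~10 µV of activity
data[2] = 0.0                              # C3 came loose: a flat line
data[6] *= 20                              # O1 is picking up huge artefacts
print(find_bad_channels(data, labels))     # ['C3', 'O1']
```

Once flagged, these channels can simply be dropped before any further processing.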

From there you might filter out any frequencies you aren’t going to pay attention to. For example, you might use a low-pass filter to filter out high frequencies, a high-pass filter to filter out low frequencies, or a band-pass filter to leave only a certain band of frequencies remaining. This can be helpful for removing electrical noise or other frequencies it happened to pick up.
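Here is a deliberately simple version of those filters. It works in the frequency domain: take the FFT, zero out the frequencies we don’t want, and transform back. Real EEG toolkits usually use smoother filter designs (for example Butterworth or FIR filters) because a hard cutoff like this can cause ringing; the function name and test signals here are hypothetical. The 50 Hz “mains” hum stands in for power-line interference (60 Hz in some countries).

```python
import numpy as np

def fft_filter(signal, fs, low_hz=None, high_hz=None):
    """Crude frequency-domain filter that zeroes out unwanted frequency bins.

    low_hz only  -> high-pass (remove frequencies below low_hz)
    high_hz only -> low-pass  (remove frequencies above high_hz)
    both         -> band-pass (keep low_hz..high_hz)
    """
    signal = np.asarray(signal, dtype=float)
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    keep = np.ones_like(freqs, dtype=bool)
    if low_hz is not None:
        keep &= freqs >= low_hz
    if high_hz is not None:
        keep &= freqs <= high_hz
    return np.fft.irfft(spectrum * keep, n=signal.size)

fs = 250
t = np.arange(2 * fs) / fs
alpha = np.sin(2 * np.pi * 10 * t)          # the 10 Hz rhythm we care about
mains = 0.5 * np.sin(2 * np.pi * 50 * t)    # electrical hum from the power line
drift = 2.0 * np.sin(2 * np.pi * 0.5 * t)   # slow baseline drift
cleaned = fft_filter(alpha + mains + drift, fs, low_hz=8, high_hz=12)
print(np.max(np.abs(cleaned - alpha)))      # close to zero
```

The band-pass call removes both the slow drift and the hum in one go, leaving only the alpha rhythm.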

We might make a handful of other modifications to clean up the data, but the final step is feature extraction. After cleaning up the data and potentially enhancing the signals, we want to identify and extract certain patterns or features that help us understand the brain state the user is in. These features are also used to classify specific actions a person might be taking, so we can begin to interpret the data and take the next step.
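One common family of EEG features is band power: how much of the signal’s energy falls in each frequency band. The sketch below computes relative band power from a plain FFT; the band edges and the synthetic epoch are assumptions, and libraries such as SciPy offer smoother spectrum estimates (for example Welch’s method) that are generally preferred on real data.

```python
import numpy as np

# Assumed band edges in Hz; sources differ slightly on where to draw the lines.
FEATURE_BANDS = {"theta": (4, 8), "alpha": (8, 12), "beta": (12, 30)}

def band_power_features(epoch, fs):
    """Summarize one channel's epoch as the relative power in each band."""
    epoch = np.asarray(epoch, dtype=float)
    freqs = np.fft.rfftfreq(epoch.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(epoch - epoch.mean())) ** 2  # crude power spectrum
    absolute = {name: float(power[(freqs >= low) & (freqs < high)].sum())
                for name, (low, high) in FEATURE_BANDS.items()}
    # Relative power (each band's share) so the features don't depend on
    # how strong the overall signal happens to be.
    total = sum(absolute.values()) or 1.0
    return {name: p / total for name, p in absolute.items()}

fs = 256
t = np.arange(2 * fs) / fs
epoch = np.sin(2 * np.pi * 10 * t) + 0.2 * np.sin(2 * np.pi * 20 * t)  # synthetic
features = band_power_features(epoch, fs)
print(max(features, key=features.get))  # alpha
```

Computed per channel, these few numbers replace hundreds of raw samples and become the input to the classifier in the next step.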

This is all high level for now; we will dive deeper as I attempt to do these things myself in the future!

Signal training with Machine Learning

Even when we have our data, interpreting it isn’t as clean as a simple if-then statement, because the brain (and its signals) is complex and noisy.

To predict intent, our system needs to be trained on what brains look like when they are trying to do our target action. At a high level, the way we use brain data is by attempting to understand what the brain is trying to do and then taking action. We do this by comparing the signals we are receiving with existing brain data for the desired activity and attempting to pattern-match.

Let’s say we want to know what it looks like in the brain when someone is trying to move their left arm. We built them a prosthetic arm and now want to give them specific control over it. We need to know what their brain activity looks like when they are trying to control it so we can properly map the software: when Steven thinks like this, move the arm like this. The tricky thing is that every brain is slightly different, so we can’t just have a master model that says “everyone trying to move their arm will look like this”. Brains share similarities, but not enough for that; brain signals are more like fingerprints. Depending on our use case, we might need to train our model specifically on the actual users of the system.
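A nearest-centroid classifier is about the simplest form of this pattern-matching: during calibration, average a user’s feature vectors for each intended action; at run time, pick the action whose average is closest. Real BCIs often use stronger classifiers (linear discriminant analysis is a common choice), and the two-number feature vectors and both users’ “patterns” below are invented for illustration.

```python
import numpy as np

class NearestCentroidDecoder:
    """Per-user pattern matcher: store the average feature vector for each
    intended action during calibration, then label new data by the closest one."""

    def fit(self, features, intents):
        features = np.asarray(features, dtype=float)
        intents = np.asarray(intents)
        self.labels_ = sorted(set(intents.tolist()))
        self.centroids_ = np.array([features[intents == lab].mean(axis=0)
                                    for lab in self.labels_])
        return self

    def predict(self, features):
        features = np.atleast_2d(np.asarray(features, dtype=float))
        # Euclidean distance from every sample to every stored pattern.
        dists = np.linalg.norm(features[:, None, :] - self.centroids_[None, :, :], axis=2)
        return [self.labels_[i] for i in dists.argmin(axis=1)]

rng = np.random.default_rng(0)

def calibration_data(patterns, n=50):
    """Hypothetical calibration session: n noisy samples per intended action."""
    X = np.vstack([rng.normal(p, 0.05, size=(n, 2)) for p in patterns.values()])
    y = [name for name in patterns for _ in range(n)]
    return X, y

steven = {"move_arm": [1.0, 0.2], "rest": [0.3, 0.3]}
other_user = {"move_arm": [0.2, 1.0], "rest": [0.3, 0.3]}

X_s, y_s = calibration_data(steven)
decoder = NearestCentroidDecoder().fit(X_s, y_s)
print(decoder.predict([[0.95, 0.25]]))             # ['move_arm']
print(decoder.predict([other_user["move_arm"]]))   # ['rest']
```

The second prediction shows why per-user training matters: a decoder calibrated on Steven misreads another user whose arm-movement pattern looks different, until it is recalibrated on that user’s own data.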

Signal Use

Once we have the data and our interpretation, we are able to use it for something. Maybe we use it to control an external device like a prosthetic or a cursor on a screen.
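Turning a stream of decoded labels into device commands often benefits from smoothing; otherwise a cursor or prosthetic twitches every time the classifier makes a one-off mistake. The sketch below uses a simple majority vote over a sliding window. The window size, the agreement threshold, and the “left”/“right” commands are assumptions.

```python
from collections import Counter, deque

class CommandSmoother:
    """Only act when the decoder has been saying the same thing for a while.

    Keeps the last `window` predictions and issues a command only if one label
    makes up at least `agreement` of them.
    """

    def __init__(self, window=5, agreement=0.8):
        self.recent = deque(maxlen=window)
        self.agreement = agreement

    def update(self, prediction):
        self.recent.append(prediction)
        if len(self.recent) < self.recent.maxlen:
            return None  # not enough evidence yet
        label, count = Counter(self.recent).most_common(1)[0]
        return label if count / len(self.recent) >= self.agreement else None

smoother = CommandSmoother()
stream = ["left", "left", "right", "left", "left", "left", "left"]
print([smoother.update(p) for p in stream])
# [None, None, None, None, 'left', 'left', 'left']
```

A command only goes out once four of the last five predictions agree, so a single stray “right” doesn’t move the cursor. The cost is a little extra delay, which ties back to the temporal resolution tradeoff.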

Some common uses of brain-reading BCIs

- We can use EEG headbands to read our brainwaves and better understand our sleep and focus. The companies behind them use this data in their meditation apps to help you focus and sleep better. Right now these headbands appear to be read-only.

- Kernel headsets (fNIRS) are used in many research studies to understand how different chemical substances impact the brain.

- Communicating by interacting with a keyboard or UI

- Controlling prosthetic limbs

Or maybe we take action in the brain based on what we are seeing. This is where the second task comes in: brain control.

Controlling your brain

Remember, this is not mind control. This is brain control.

The key here is sending feedback back into the brain to create a response. Depending on the use case, we might use the same technology or something external.

We can use specific stimulation to the brain as part of our BCI to encourage different types of behaviour. Here are a few interesting ones:

- Seizure suppression → NeuroPace has developed BCI technology to help reduce or eliminate the impact of epilepsy on patients. When the system detects brain patterns indicating that a seizure is imminent, it delivers electrical stimulation to the brain through RNS (Responsive Neurostimulation) to reduce the seizure’s severity.

- Improved learning → Outside of BCIs, researchers have started studying whether electrical stimulation can help with learning, using a technique known as transcranial electrical stimulation.

- Recreate feelings → Controlling a prosthetic device can feel uncanny, since you have no sense of touch as you navigate the device through the world. Thanks to BCIs, we are starting to see experiments using haptic feedback to create tactile sensations for patients so they can better interact with the world. By placing electrodes in specific areas of the brain, we can recreate physical feelings as the user interacts with the world. This is still early days, as this test was done with a single subject.
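Responsive systems like the seizure-suppression example follow a closed loop: watch the signal, detect a pattern, deliver stimulation. The sketch below is a toy version of the detection half using line length, a simple feature that appears in seizure-detection research, on synthetic data. The window size, the 3× threshold rule, and “stimulating” by returning window indices are all assumptions; this is not a description of NeuroPace’s actual detection algorithm.

```python
import numpy as np

def line_length(window):
    """Sum of absolute sample-to-sample changes; large, fast activity scores high."""
    return float(np.abs(np.diff(window)).sum())

def windows_to_stimulate(signal, fs, window_s=1.0, multiplier=3.0, baseline_windows=5):
    """Return the indices of windows where a toy closed loop would stimulate.

    The threshold is `multiplier` times the mean line length of the first
    `baseline_windows` windows, which are assumed to be seizure-free.
    """
    step = int(window_s * fs)
    lengths = [line_length(signal[i:i + step])
               for i in range(0, len(signal) - step + 1, step)]
    threshold = multiplier * np.mean(lengths[:baseline_windows])
    return [i for i, length in enumerate(lengths) if length > threshold]

rng = np.random.default_rng(0)
fs = 256
signal = rng.normal(0.0, 1.0, 10 * fs)                          # 10 s of background
burst_t = np.arange(2 * fs) / fs
signal[7 * fs:9 * fs] += 50 * np.sin(2 * np.pi * 10 * burst_t)  # 2 s of large rhythmic activity
print(windows_to_stimulate(signal, fs))  # [7, 8]
```

Only the two one-second windows containing the burst cross the threshold; in a real device, that decision would trigger stimulation within milliseconds rather than after the recording ends.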

Making telepathy real

So how far are we from reading people’s minds and communicating?

Let’s walk through some recent developments in this space that can point us in the right direction.

Turning brain activity into speech patterns @ Meta

Researchers at Meta have been trying to decode brain activity into actual speech. They trained a model on roughly 150 hours of recordings of volunteers listening to audiobooks. Building on a model their team developed back in 2020, they looked for patterns in brain activity that corresponded to what the volunteers were hearing. From there, they could match brain activity to sentences from the audiobook. Their final test: based on a recording of brain activity, predict which audio clip the person actually heard! This lays a really exciting foundation for future work on decoding specific brain recordings, both to enable communication for people who can’t speak and, potentially, telepathy.

Recreating images from the brain

Researchers from the National University of Singapore, Stanford, and the Chinese University of Hong Kong have created a new way to recreate images that the brain sees. In their experiments, subjects look at a specific image while researchers capture their brain activity using fMRI. Using their diffusion model, the researchers can recreate images with mostly correct semantic features, meaning the colours, shapes, and objects the subject saw. While this is happening in a controlled environment, it’s exciting to think we might be able to recreate images based solely on brain activity. The more of these experiments happen, the more training data we’ll have for the future!

Predicting words you’re thinking of

Researchers at Caltech conducted experiments to figure out which word a person is thinking of, whether prompted by an audio cue or just by thinking about it. It worked like this: first, you read every word from a list to calibrate the model. Once your brain patterns are mapped, you focus on one of the words in the list, and the researchers match your brain patterns to predict the word. What was cool was that they could see which neurons were activated for specific words, and they were able to train the model with only 24 seconds of training data. This is obviously a small study, but again an early indication of what we may be capable of. One point researcher Sarah Wandelt made clear was that they cannot read thoughts, only the words you’re explicitly focused on.

Sending messages into the brain

Researchers have run very early trials of “brain-to-brain” communication in which binary thoughts (yes/no) are delivered to another brain. The recipient receives magnetic pulses (I’m unclear on what they feel in this instance) in a Morse-code-like pattern that represents yes or no. This is still very primitive, but it paves the way for future improvements and, eventually, telepathy.

What’s next?

It feels like we have many of the building blocks necessary for telepathy. As we’ve seen, most of the exciting progress is happening in the decoding space: developing AI models that can better interpret brain waves. Yes, there is value in finding new non-invasive ways to capture high-quality brain data, but what I’m most excited about is using that data in new, creative ways.

I hope you learned something new about this technology and are excited (and maybe a bit terrified) about our connected future.